Is ChatGPT Getting Lazy?

Every conversation with ChatGPT comes with a hidden price tag, and the meter is always running.

If you've been using ChatGPT lately, you might have noticed something weird. The AI that used to eagerly tackle any task now seems... tired. Sometimes it refuses to finish what you asked. Other times it gives you a template instead of the real answer. And occasionally, it just stops mid-sentence like it lost interest.

But here's the thing. The AI isn't actually getting lazy or developing an attitude. What's really happening is way more interesting. It's all about money and the massive challenge of keeping this technology running.

The Hidden Costs of a Conversation

Every time you ask ChatGPT a question, massive computers work in the background. They burn through electricity and processing power. All of that costs money.

OpenAI has a problem: keep the AI smart, but don't go broke doing it.

The unit that matters here is the "token." Tokens are small pieces of text, often a word or part of a word. A long answer uses tons of tokens. A short answer uses fewer. More tokens mean higher costs.
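To make the token idea concrete, here is a rough sketch of how answer length turns into cost. It rests on two assumptions that are not from OpenAI: the common rule of thumb of about four characters per token for English text (real tokenizers differ), and a made-up price per 1,000 tokens chosen only for illustration.

```python
import math

# ASSUMPTION: ~4 characters per token is a rule of thumb, not a real tokenizer.
CHARS_PER_TOKEN = 4
# ASSUMPTION: hypothetical price in dollars, not an actual OpenAI rate.
PRICE_PER_1K_TOKENS = 0.01


def estimate_tokens(text: str) -> int:
    """Approximate how many tokens a piece of text would use."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_cost(text: str) -> float:
    """Approximate the cost in dollars of generating this text."""
    return estimate_tokens(text) / 1000 * PRICE_PER_1K_TOKENS


short_answer = "Use a loop."
long_answer = "Use a loop. " * 200  # stand-in for a multi-page answer

print("short:", estimate_tokens(short_answer), "tokens")
print("long: ", estimate_tokens(long_answer), "tokens")
print("long answer costs more:", estimate_cost(long_answer) > estimate_cost(short_answer))
```

The exact numbers don't matter. The point is that cost grows in step with the length of the answer.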

What if ChatGPT's laziness is intentional?

Shorter responses save money. A lot of money. The AI is still smart. It's just being more careful about when to go all out. When you're serving millions of users daily, every saved token matters.

The High Cost of Being Smart

To understand why the model is being lazy, you have to look at the bill.

When you use a subscription service like Netflix, the cost to serve you is relatively static. It does not cost Netflix much more if you watch ten movies versus one movie.

LLMs are different. Every single word the model generates is produced by running the model, a step called "inference." That takes substantial computing power from expensive graphics processing units (GPUs), so the cost to serve you grows with how much the model writes.

Think of it like a taxi meter that is always running.

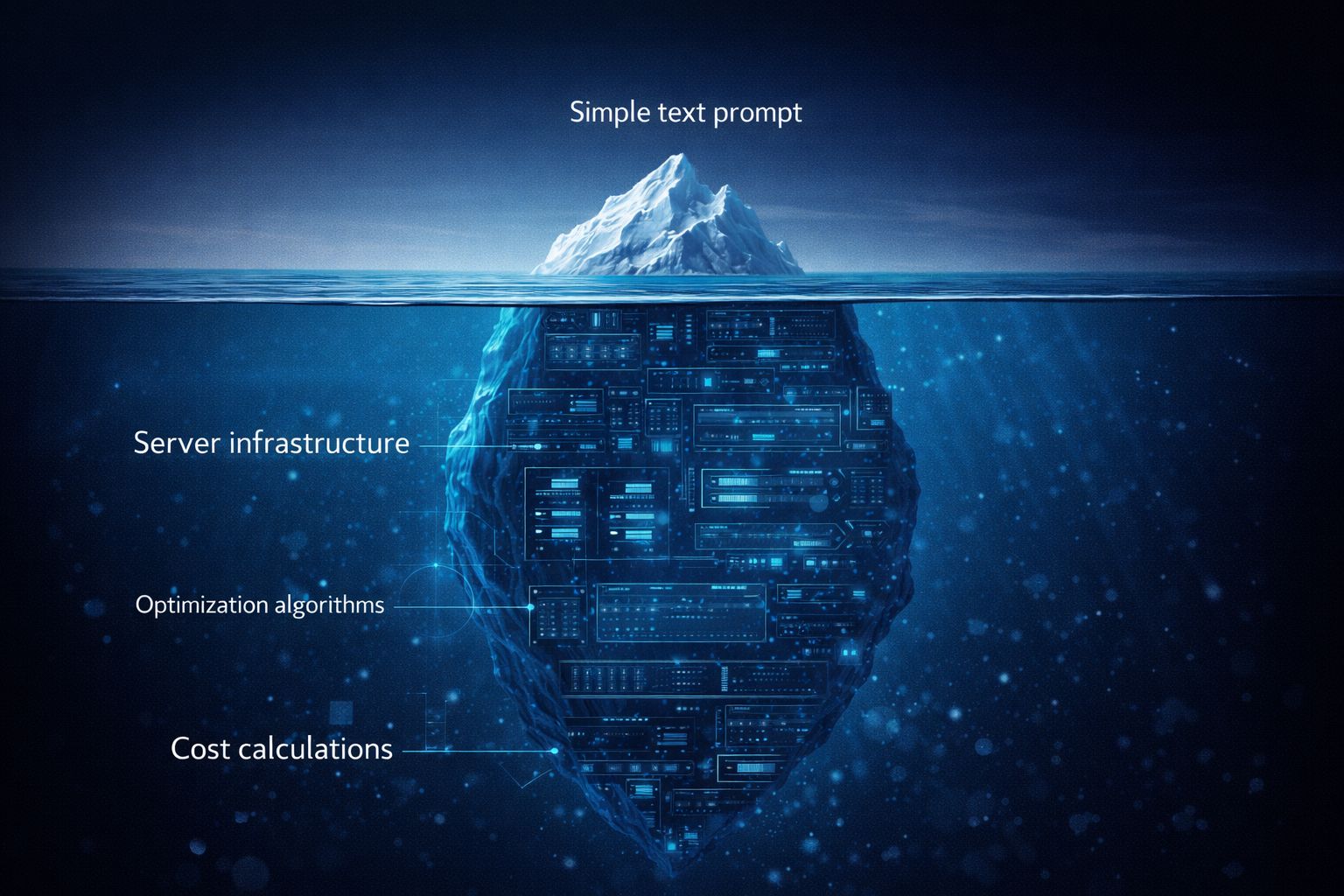

Behind every response lies a complex calculation of tokens, computation, and cost that most users never see.

When the model gives you a long, thoughtful, three-page answer, that is an expensive trip for the company. When it gives you a two-sentence summary, that is a cheap trip.

As these tools scale from one million users to one hundred million users, those costs scale with them. If a company can tweak the model to be 10% more concise, it trims roughly 10% off the cost of generating responses, which at that scale could mean millions of dollars in server costs almost overnight.
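Here is a back-of-the-envelope sketch of that math. Every number in it (responses per user, tokens per response, price per 1,000 tokens) is a made-up placeholder, not a real figure from any provider. It only shows how a 10% cut in response length flows through to the bill.

```python
def daily_cost(users, responses_per_user, tokens_per_response, price_per_1k_tokens):
    """Total daily generation cost in dollars under the given assumptions."""
    total_tokens = users * responses_per_user * tokens_per_response
    return total_tokens / 1000 * price_per_1k_tokens


# ASSUMPTIONS: all values below are hypothetical, for illustration only.
USERS = 100_000_000
RESPONSES_PER_USER = 5
TOKENS_PER_RESPONSE = 500
PRICE_PER_1K_TOKENS = 0.01  # dollars

# The same workload with responses that are 10% shorter.
CONCISE_TOKENS_PER_RESPONSE = TOKENS_PER_RESPONSE * 90 // 100

baseline = daily_cost(USERS, RESPONSES_PER_USER, TOKENS_PER_RESPONSE, PRICE_PER_1K_TOKENS)
concise = daily_cost(USERS, RESPONSES_PER_USER, CONCISE_TOKENS_PER_RESPONSE, PRICE_PER_1K_TOKENS)

print(f"baseline daily cost: ${baseline:,.0f}")
print(f"concise daily cost:  ${concise:,.0f}")
print(f"daily savings:       ${baseline - concise:,.0f}")
```

Change any of the placeholders and the savings change with them, but the shape stays the same: a small percentage cut multiplied by a huge number of responses.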

Laziness is not a bug. For the provider, it is a feature.

The Challenge of Model Degradation

Another factor at play is the concept of model degradation. It may seem counterintuitive, but as language models are updated and fine-tuned, their performance on certain tasks can sometimes get worse. A 2023 study from Stanford and UC Berkeley found that GPT-4's accuracy on some math problems, such as identifying prime numbers, declined over a period of just a few months.

This can happen for a few reasons:

Overfitting: When a model is trained too much on specific examples, it can lose its ability to generalize to new, unseen problems.

Data Drift: The world is constantly changing, and the data used to train these models can become outdated.

Unintended Consequences: An update designed to improve one area might accidentally cause a decline in another.

OpenAI has acknowledged that model behavior can be unpredictable, and it is looking into the issue. This is a common challenge in the tech world: any system that gets updated regularly can regress in ways nobody intended.

The Limits of a Long Conversation

If you've ever had a long, detailed conversation with ChatGPT, you may have noticed it starts to "forget" things you mentioned earlier. This isn't a sign of a faulty memory, but a limitation of its "context window." The model can only hold a certain amount of information in its active memory at one time.

As a conversation gets longer, older parts of the discussion are pushed out of the context window to make room for new information. This can lead to responses that seem inconsistent or ignore previous instructions. While newer models have larger context windows, they are not infinite, and performance can still degrade as the conversation grows.
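One simple way to picture this is a sliding window over the conversation. The sketch below is an illustration only, not how ChatGPT is actually implemented: the function names are hypothetical, and it counts one token per word, which is a crude stand-in for a real tokenizer.

```python
def rough_token_count(text):
    """ASSUMPTION: one token per word, a crude stand-in for a real tokenizer."""
    return len(text.split())


def trim_to_window(messages, max_tokens, count_tokens=rough_token_count):
    """Keep only the most recent messages that fit inside the token budget."""
    kept = []
    used = 0
    # Walk backwards from the newest message, stopping once the budget is full.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


conversation = [
    "my name is Ada",
    "I like tea",
    "what is my name",
]

# With a tiny budget, the oldest message no longer fits.
print(trim_to_window(conversation, max_tokens=8))
```

With a budget of 8 "tokens," the first message falls out of the window, so a model that only sees the trimmed history has no way to answer the final question. Real systems may handle long conversations differently, for example by summarizing older turns, but the limit itself works in the same spirit.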

What This Means for Us

The "laziness" of ChatGPT isn't a sign of its impending obsolescence. Rather, it's a reflection of the inherent challenges in building and maintaining large-scale AI systems. As users, understanding these limitations can help us work more effectively with these powerful tools.

Here are a few things to keep in mind:

Be specific with your prompts. The clearer your instructions, the more likely you are to get the response you want.

Break down complex tasks. Instead of asking for a massive output all at once, try breaking your request into smaller, more manageable steps.

Start new conversations for new topics. This will help ensure the model has the full context of your request without being bogged down by irrelevant information from previous discussions.

The capability is still there, buried under layers of optimization. You just have to dig a little deeper to find it.

A lot is happening behind the scenes. We're in an awkward phase. Unlimited AI power is hitting real costs like server bills.

ChatGPT isn't getting dumber. It's getting cheaper to run.

The good news? The power is still there. You just need to ask better. Be specific. Tell it exactly what you want.

The capability didn't vanish. It's hiding under cost cuts. Dig deeper and you'll find it.

Until next week,

Better Every Day